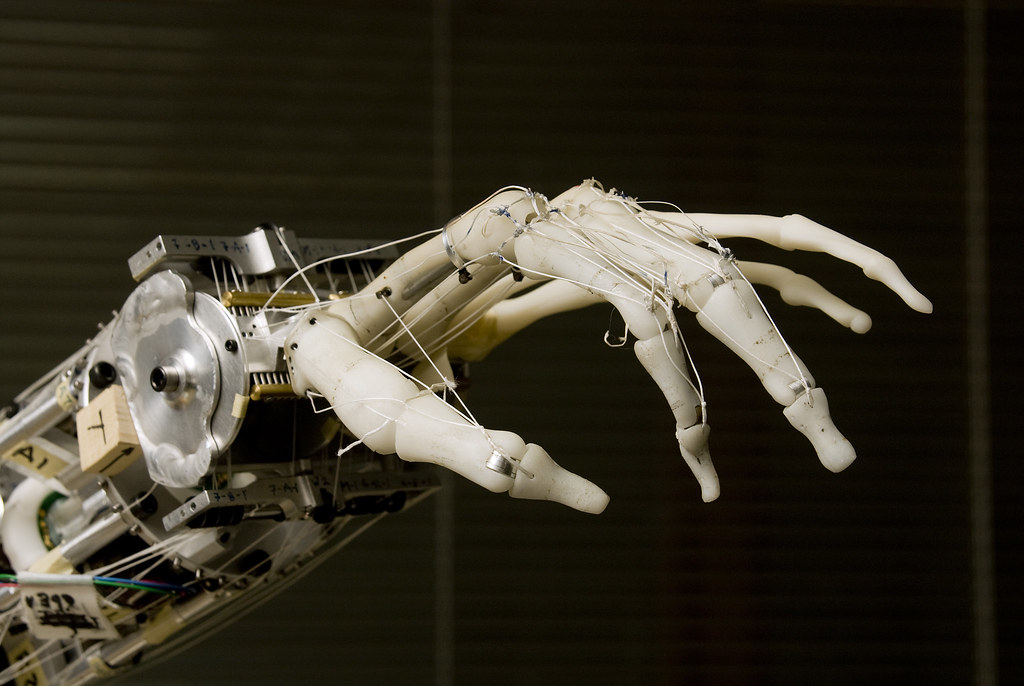

The e-skin is called Asynchronous Coded Electronic Skin (Aces), and is inspired by the human sensory nervous system. It detects signals and differentiates between kinds of physical contact, much as a human being does. But unlike the nerve bundles in human skin, the electronic nervous system is made of a network of sensors connected by a single electrical conductor.

The device is made up of 100 small sensors and is about 1 sq cm (0.16 square inch) in size. The researchers say it can process information faster than the human nervous system, is able to recognise 20 to 30 different textures and can read Braille letters with more than 90% accuracy.

“So humans need to slide to feel texture, but in this case the skin, with just a single touch, is able to detect textures of different roughness,” said research team leader Benjamin Tee, adding that AI algorithms let the device learn quickly.

A demonstration showed the device could detect that a squishy stress ball was soft, and determine that a solid plastic ball was hard.

Tee said the concept was inspired by a scene from the “Star Wars” movie trilogy in which the character Luke Skywalker loses his right hand and receives a robotic replacement that seemingly lets him experience touch sensations again.

The technology is still in the experimental stage, but there had been “tremendous interest”, especially from the medical community, Tee added.